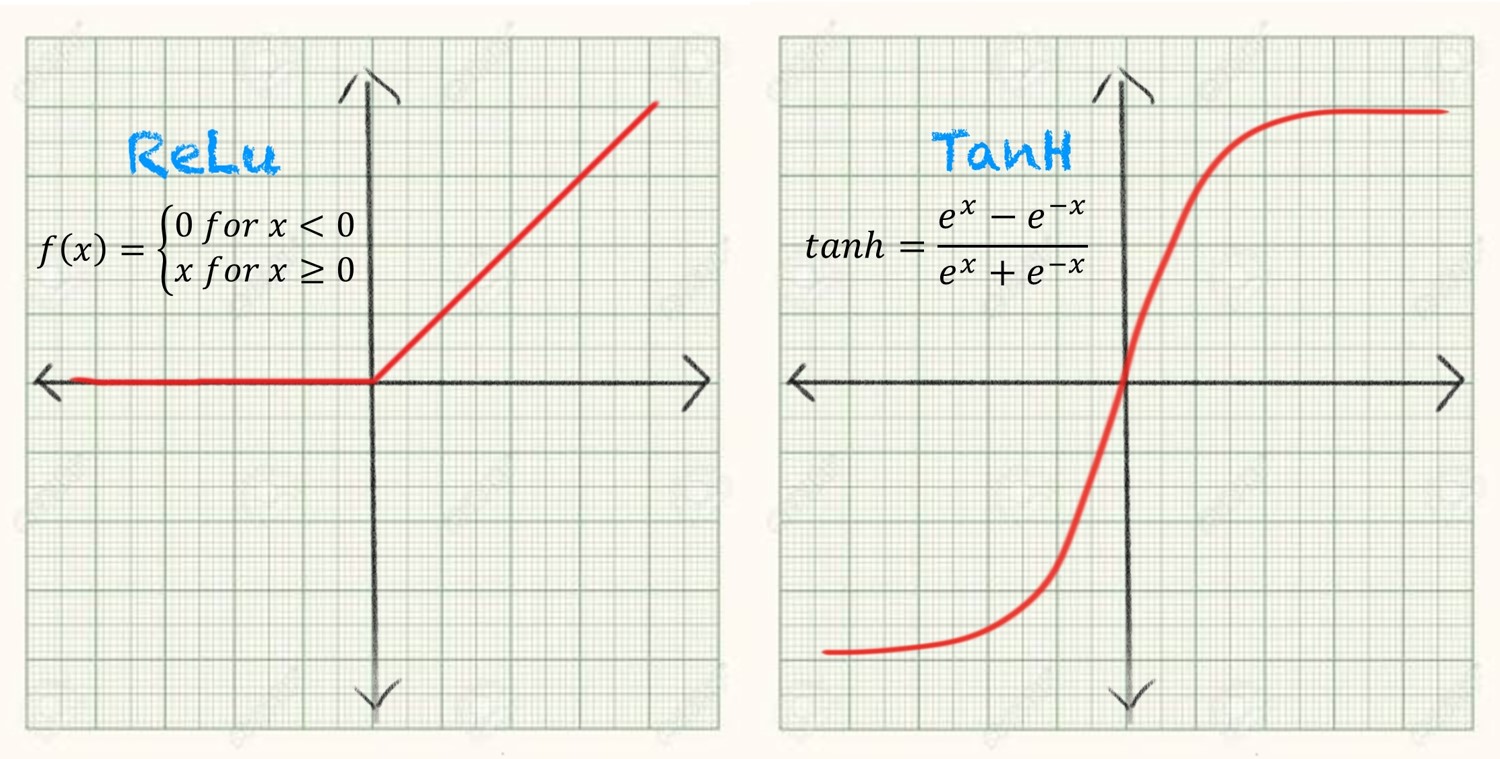

For something often glossed over in tutorials, the choice of activation function can be a make-or-break decision in your neural network setup. With the growth of Transformer-based models, different variants of activation functions and GLUs (gated linear units) have gained popularity. In this post, we will cover several different activation functions, their respective use cases, and their pros and cons. While there will be some graphs and equations, this post will try to explain everything in relatively simple terms.

Why use an activation function?

Activation functions are an essential component of neural networks, including transformer models. They introduce non-linearity into the network, which allows the model to learn and represent complex patterns in the data. Without them, a neural network could only learn linear relationships between input and output, which would greatly limit its ability to model real-world data. By using an activation function, a neural network can learn complex, non-linear relationships and make more accurate predictions.

In a neural network, the activation function governs how the weighted sum of the inputs to a node in a given layer is transformed into that node's output. Essentially, the activation function determines how, and how well, the model learns from training data, and what type of predictions the model can make. It follows that the choice of activation function has a large impact on the capabilities and performance of a neural network. Activation functions can be chosen per layer, so different layers of one model can use different functions, which lets a single network handle different kinds of tasks. They are applied when the activation values of each layer are computed, to decide what each value should be. Although the activation function is applied at each node, neural networks are typically designed to use the same activation function for all nodes in a layer.
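To make the point about non-linearity concrete, here is a minimal sketch using only plain Python. The weights and biases are made-up numbers chosen for illustration, and each "layer" has a single node. It shows that two stacked layers without an activation collapse into one linear function, while putting a ReLU between them does not.

```python
def linear(w, b, x):
    """Weighted sum for a single-input node: w * x + b."""
    return w * x + b


def relu(z):
    """ReLU activation: pass positive values through, clamp the rest to 0."""
    return max(0.0, z)


def two_layers_no_activation(x):
    # Layer 1: 2x + 1, layer 2: 3h - 1 (illustrative weights, not from the post).
    return linear(3.0, -1.0, linear(2.0, 1.0, x))


def single_equivalent_layer(x):
    # 3 * (2x + 1) - 1 simplifies to 6x + 2: still just one linear layer.
    return linear(6.0, 2.0, x)


def two_layers_with_relu(x):
    # Same weights, but the hidden value goes through ReLU first.
    return linear(3.0, -1.0, relu(linear(2.0, 1.0, x)))


if __name__ == "__main__":
    for x in [-2.0, -1.0, 0.0, 1.0]:
        print(x, two_layers_no_activation(x), two_layers_with_relu(x))
```

Without the activation, the output changes by the same amount for every unit step in `x`. With ReLU, the output is flat for negative hidden values and then starts to rise, which is something no single linear layer can reproduce.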
Activation functions play an important role in neural networks, including BERT and other transformers. In a transformer model, a softmax is applied inside the self-attention mechanism to turn attention scores into weights that express the importance of each element in the input sequence, while the feed-forward layers use an activation function such as GELU.

The choice of activation function is very important and can greatly influence the accuracy and training time of a model. Swish is one of the newer activation functions. It was first proposed in 2017 and was found using a combination of exhaustive and reinforcement learning-based search. The authors of the paper proposing Swish reported that it outperforms ReLU and related functions such as Parameterized ReLU (PReLU), Leaky ReLU (LReLU), Softplus, Exponential Linear Unit (ELU), Scaled Exponential Linear Unit (SELU) and Gaussian Error Linear Units (GELU) on datasets such as ImageNet and CIFAR when substituted for ReLU in existing model architectures.

Swish is defined as f(x) = x · σ(βx), where σ(x) = 1/(1+exp(-x)) is the sigmoid function. β can either be a constant defined prior to training or a parameter that is learned during training.

Swish is continuous at all points. Its shape looks similar to ReLU: it is unbounded above and bounded below. Unlike ReLU, however, it is differentiable at all points and is non-monotonic. It looks a lot like ReLU because for large enough positive values, σ(x) becomes approximately equal to 1, so the value of Swish becomes approximately equal to x. Similarly, for large enough negative values, σ(x) becomes approximately equal to 0, so the value of Swish becomes approximately equal to 0.
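The Swish behaviour described above can be checked numerically. The following is a small sketch in plain Python, assuming the usual definition f(x) = x · σ(βx); the function names and the default `beta=1.0` are choices made for this example, not something fixed by the post.

```python
import math


def sigmoid(x):
    """Sigmoid 1 / (1 + exp(-x)), written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x, beta=1.0):
    """Swish activation x * sigmoid(beta * x); beta is treated as a fixed constant here."""
    return x * sigmoid(beta * x)


def relu(x):
    """ReLU, included only for comparison."""
    return max(0.0, x)


if __name__ == "__main__":
    for x in [-20.0, -5.0, -1.0, 0.0, 1.0, 5.0, 20.0]:
        print(f"x={x:6.1f}  swish={swish(x): .6f}  relu={relu(x): .1f}")
```

For large positive inputs the Swish column tracks ReLU closely, and for large negative inputs it approaches 0. The dip around x = -1 is the non-monotonic part: Swish at -1 is lower than at both -5 and 0. Setting `beta` to a large value pushes the curve toward ReLU, while `beta=0` gives the linear function x/2.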
